Local governments must be proactive about AI revolution

By KATE COIL

With talk of artificial intelligence dominating much of the conversation around tech, local governments of all shapes and sizes are weighing the risks and benefits of employing AI to improve services, streamline data, and engage with the public.

TML Preferred Technology Partner VC3 recently hosted the webinar “Embracing the Future: AI’s Influence on Local Government” to discuss how artificial intelligence works, how local governments are already using AI, and what policies local governments may want to adopt concerning data privacy.

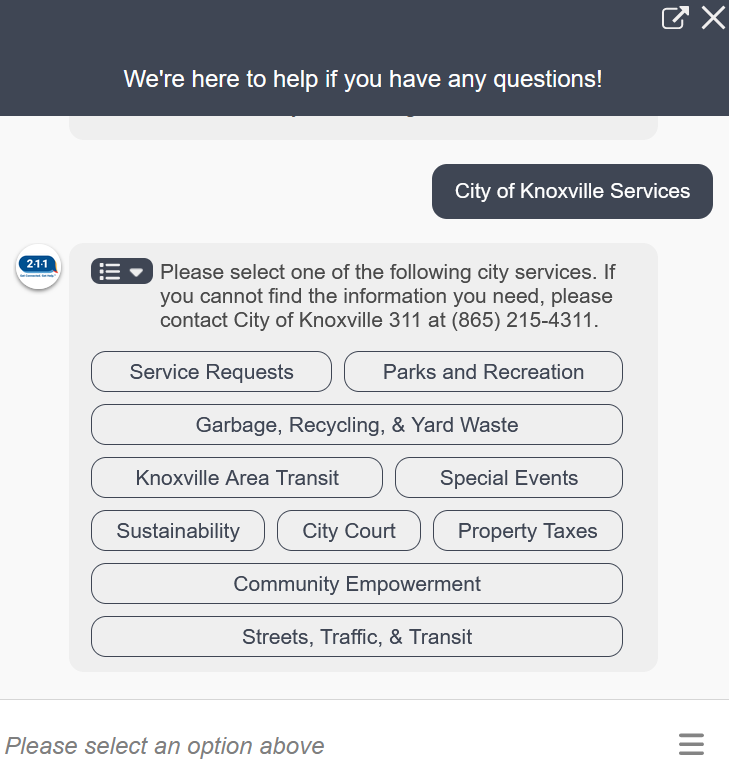

Kevin Benson, director of applications development for VC3, said many municipalities or their employees are already using AI in ways they may not realize, such as chatbots that pop up on a municipal website or Facebook page to answer frequently asked questions, autocorrect and autosuggest features, and email spam filters.

“Until now, we have all considered ourselves unique in our ability to create something new, to create something that didn’t exist before,” Benson said. “What AI is doing now is filing tax returns, replying to emails, and making music. It is amazing what is going on in the artificial intelligence space.”

With AI already in use, Benson said cities need to take time to consider both how they can use this technology and what parameters and policies they want to put in place for employees using AI in the office.

AI AND DECISION-MAKING

Benson said AI is defined as a branch of computer science that uses a large amount of data to predict patterns. Humans often make decisions based on information and knowledge, past experiences, and their emotions.

“In order for me to make a decision as a human, there is some data behind it, and hopefully that data is accurate,” Benson said. “There is also some gut feeling and emotion that goes into it. Obviously, computers don’t have emotion. We use data to help us make decisions every single day. Computers are really doing the same thing we are doing, and they are really able to understand data.”

Many companies and individuals have used AI to make data-driven decisions in the hopes of providing more relevant and accurate determinations. The more data available and the higher its quality, the better the decisions that can be made.

By 2026, 80% of companies are projected to have incorporated AI into their business, with a 37% growth rate in AI predicted between 2023 and 2030. However, 75% of consumers are concerned about AI and misinformation.

Benson used the example of a librarian and a researcher to explain the difference between using a search engine and using AI. A search engine, like a librarian, can easily retrieve, catalog, classify, organize, manage, and provide information, but the user must do the research themselves. AI is more like a researcher: solving problems, predicting trends, and collecting, organizing, analyzing, and interpreting data.

Machine learning is a type of AI in which a computer learns to recognize patterns without being explicitly programmed to do so. This type of AI is often used in content recommendations, credit card fraud detection, and traffic prediction.

Generative AI, or GenAI, creates something new, like video, audio, text, and 3D models. This type of model learns patterns from existing data and then uses that knowledge to generate highly realistic and complex content. Examples include the text-generating program ChatGPT and the image generator DALL-E.

RISK AND REWARDS

As with any technology, there are inherent risks to using AI. Because much of the information AI systems mine comes from humans, Benson said innate biases in the data may lead AI to generate biased results.

There is also concern about the lack of transparency in some AI tools, where it is unclear to users where the data comes from or how the program makes its decisions. The security of AI models against cyberattacks has also been a concern.

“There is concern that AI is vulnerable to attack where they can manipulate data to deceive the model,” Benson said. “What if you were on Amazon and a hacker was able to get into the site and change the review system so no one star reviews would appear. That would change your decision. If a malicious actor can manipulate the model, that is not necessarily clear to us.”

AI also raises privacy concerns, especially around data mining.

“When you put information into an AI tool, that information becomes part of its data set,” Benson said. “I would never, and I would encourage you to never, put any personal information or sensitive information into that tool. Anything you wouldn’t put on a billboard you should not put into this tool. Even if you are dealing with a very specific issue, do not use names, emails, or any information that can be tracked back to you or a private individual.”

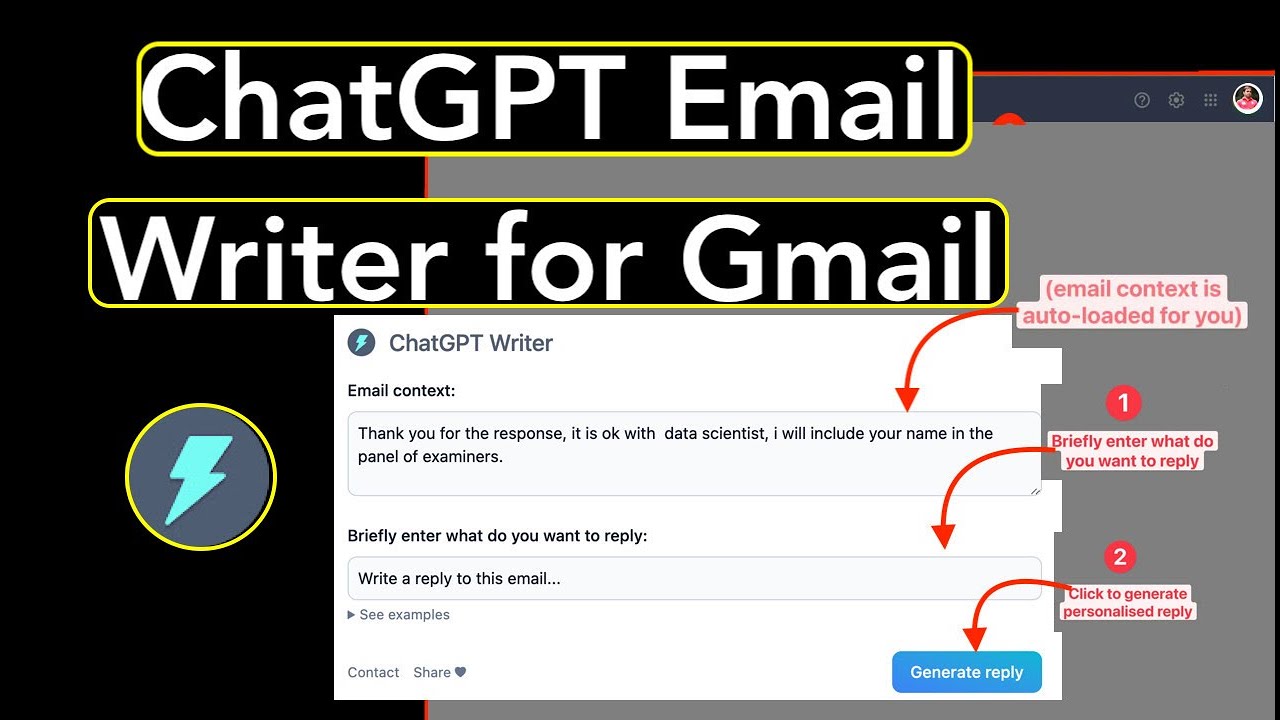

Some city employees may already be using AI features to help write a response email to a concerned citizen or create a presentation to their city board. Benson said it is helpful to proactively educate employees about how to safely navigate AI and to set a policy about how, where, when, and why employees can use the technology.

“You may not know it, but you may have people in your organization using these tools every single day,” he said. “They may not be up front about it. You need to set some governance and some messaging before your data gets exposed by someone who has not been told not to use that information before there is a security breach. I was talking about AI in a team meeting, and a recent hire informed me they had used ChatGPT to write their cover letter. Even if you are not pushing AI right now, I bet there are people in your organization using it.”

Benson said AI-generated content shouldn’t be the end result but rather the starting point for a project.

“AI is not giving us a result, it is giving us a starting point,” he said. “You have to understand it, look through it, and challenge it. There needs to be a fair amount of caution when you use this technology. I would really challenge us to think of this as an assistant to help you get a result that you thought of rather than a blanketly trust. If something doesn’t really seem right, you have to challenge it. I would never copy paste anything I got from AI without reading it, challenging it, and verifying it yourself.”

LOCAL GOVERNMENT AND AI

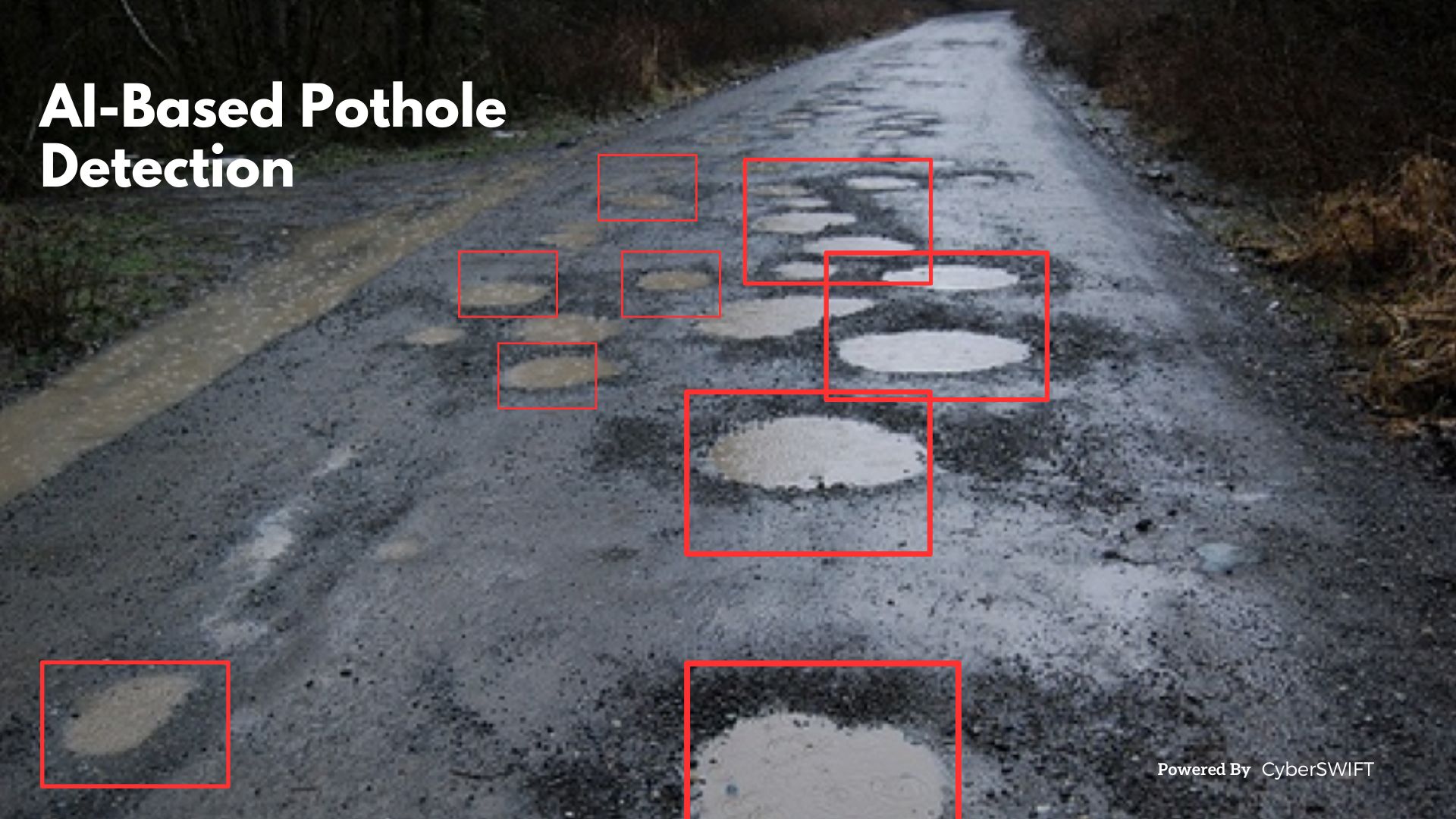

There are many ways local governments can use AI, and many already are without even knowing it. Benson said being rerouted on Google Maps due to a road accident is one way AI is being used and an example of how cities themselves can use AI for their own purposes.

Data collected for Google Maps includes historic traffic data, current traffic data, weather conditions, road quality, and even mentions of accidents on social media to better predict arrival time. Benson said much of this same data can also be used by cities.

AI can use data such as trash volume, location, collection times, weather forecasts, and road conditions to help a city’s public works department optimize waste collection routes to save both time and fuel.

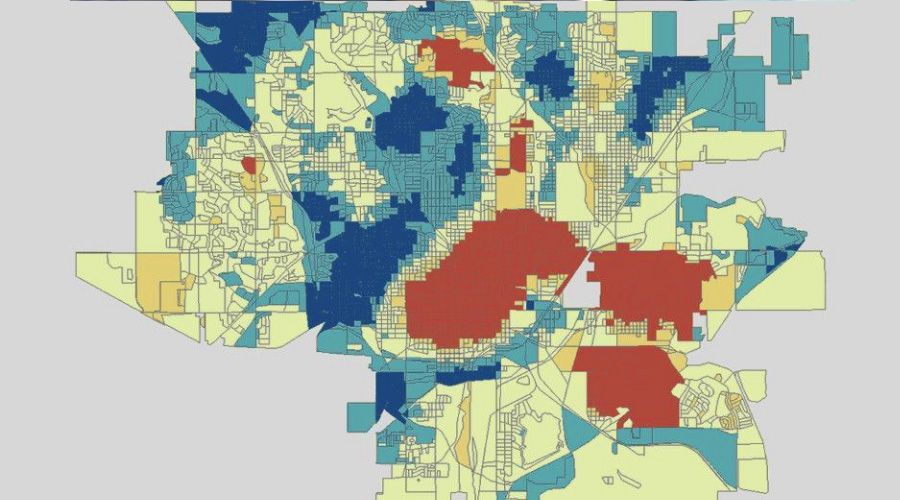

Municipal planners have used data on population growth, housing development, transportation, and traffic to model where their cities are growing and how best to target areas where they want growth.

AI can also be used for citizen engagement, such as automated complaint systems through 311 that can help determine where the majority of complaints in the city are coming from so the municipality can proactively address issues in those areas, such as road conditions, litter, and crime.
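
For readers curious what the first step of such a complaint analysis might look like, here is a minimal sketch in Python. It is an illustration only, not a tool described in the webinar: the neighborhood names, complaint categories, and function names are all hypothetical, and the code does plain tallying rather than any machine learning. A real system would presumably build prediction on top of counts like these.

```python
from collections import Counter

# Hypothetical 311 records: (neighborhood, complaint category).
# These values are invented for illustration only.
complaints = [
    ("Downtown", "pothole"),
    ("Eastside", "litter"),
    ("Downtown", "pothole"),
    ("Downtown", "litter"),
    ("Northgate", "streetlight"),
    ("Eastside", "pothole"),
]


def complaints_by_neighborhood(records):
    """Count how many complaints came from each neighborhood."""
    return Counter(neighborhood for neighborhood, _ in records)


def top_hotspots(records, n=3):
    """Return the n most frequent (neighborhood, category) pairs with their counts."""
    return Counter(records).most_common(n)


if __name__ == "__main__":
    print(complaints_by_neighborhood(complaints))
    print(top_hotspots(complaints, n=1))
```

With tallies like these, a public works department could see at a glance which area and issue type generates the most calls and schedule crews accordingly.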